The Turn Detection Trap: When 100% Accuracy is Wrong

Turn detection is the subtle art of knowing when a user has finished speaking. In a voice bot, getting this wrong leads to two painful failure modes:

- The Interruption: The bot cuts you off while you're pausing to check your credit card number.

- The Awkward Silence: You finish speaking, and the bot stares blankly for three seconds before replying.

Most systems rely on a standard Voice Activity Detector (VAD) or a general-purpose language model. We wanted to answer a specific question: "If we keep the architecture and latency target fixed (MobileBERT, less than 50ms) and only change the training corpus from 'general conversations' to 'call-center transcripts', how much accuracy do we gain?"

We ran the experiment. We got the results. And for a brief, shining moment, we thought we had solved turn detection forever.

We achieved 100% accuracy.

Then we looked closer.

Phase 1: The "Perfect" Model

We trained four different model variants based on MobileBERT, chosen for its speed on CPU. All models were fine-tuned to classify text as "Complete" (turn over) or "Incomplete" (user still speaking).

- Model_General: Trained on PersonaChat and TURNS-2K (casual conversation).

- Model_Domain: Trained on the AIxBlock Call Center dataset (support calls).

- Model_Agent: Trained only on agent utterances.

- Model_Customer: Trained only on customer utterances.

To prevent memorization, we enforced a one-shot rule: every unique sentence appeared exactly once in the training set.
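One way to enforce a rule like this is to deduplicate on a normalized key before training. The snippet below is a minimal sketch, not the project's actual code: the `(text, label)` tuple layout, the function name `enforce_one_shot`, and the choice to treat case and whitespace variants as duplicates are all assumptions made for illustration.

```python
from typing import Iterable, List, Tuple

Example = Tuple[str, str]  # (utterance text, label); an assumed layout


def enforce_one_shot(examples: Iterable[Example]) -> List[Example]:
    """Keep only the first occurrence of each unique sentence."""
    seen = set()
    unique: List[Example] = []
    for text, label in examples:
        # Assumption: sentences that differ only in letter case or
        # spacing count as the same sentence.
        key = " ".join(text.split()).casefold()
        if key in seen:
            continue
        seen.add(key)
        unique.append((text, label))
    return unique
```

If exact string matches are the intended notion of "unique", the key can simply be `text` itself.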

The Initial Results

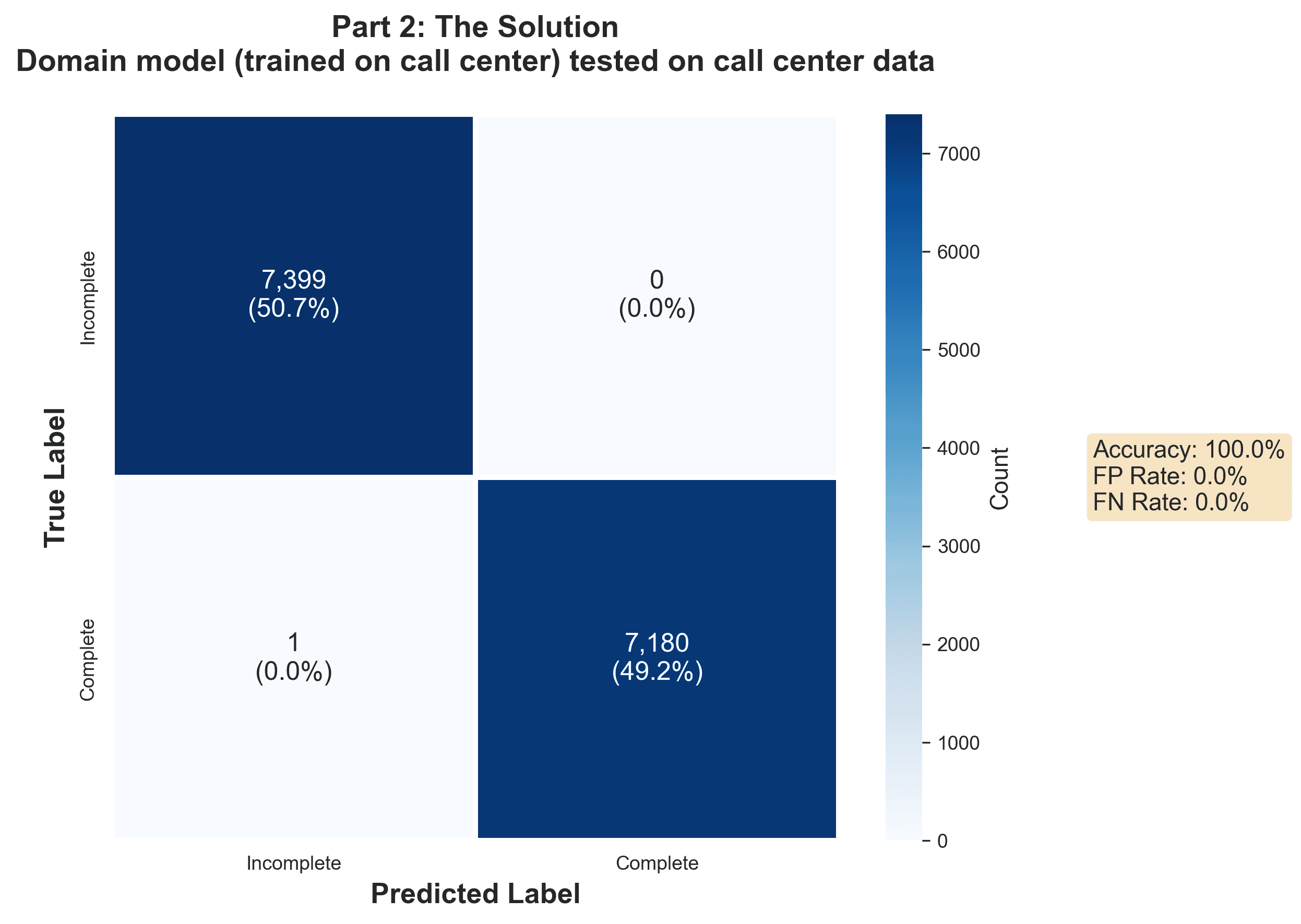

When we tested our domain-specific model on the call center test set, the results were literally perfect.

- Model_General: 87.5% accuracy

- Model_Domain: 100.0% accuracy

We had improved accuracy by 12.5 percentage points just by switching to domain-specific data. It seemed that "completeness" in a call center context was highly pattern-based, and our model had learned those patterns perfectly.

We were ready to declare victory. But 100% accuracy in machine learning is usually a red flag. It means either you leaked data into your test set or your model found a cheat code.

Phase 2: The Punctuation Trap

We decided to stress-test the model by simulating a real-time stream. In a real voice bot, text arrives word-by-word from the Automatic Speech Recognition (ASR) system.

We fed the sentence "Is it a 250 or 350?" into the model one word at a time to see when it decided the turn was complete.

| Word(s) | General Model | Domain Model |
|---------------------|---------------|--------------|
| Is | Incomplete | Incomplete |
| Is it | Incomplete | Incomplete |
| Is it a | Incomplete | Incomplete |
| Is it a 250 | Incomplete | Incomplete |
| Is it a 250 or | Incomplete | Incomplete |
| Is it a 250 or 350? | Complete | Complete |

Both models held "Incomplete" steadily until the very last token. But look closely at that last token. It has a question mark.
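A word-by-word replay like this can be sketched in a few lines. The helper names (`word_prefixes`, `simulate_stream`) and the `toy_classifier` stand-in are hypothetical; in a real run, `classify` would wrap the fine-tuned model's prediction call.

```python
from typing import Callable, Iterator, List, Tuple


def word_prefixes(sentence: str) -> Iterator[str]:
    """Yield the growing prefixes an ASR stream would deliver word by word."""
    words = sentence.split()
    for i in range(1, len(words) + 1):
        yield " ".join(words[:i])


def simulate_stream(
    sentence: str, classify: Callable[[str], str]
) -> List[Tuple[str, str]]:
    """Run the classifier on every prefix and record its decision."""
    return [(prefix, classify(prefix)) for prefix in word_prefixes(sentence)]


def toy_classifier(text: str) -> str:
    # Placeholder for a real model (assumption, for demonstration only).
    return "Complete" if text.endswith((".", "?")) else "Incomplete"


for prefix, decision in simulate_stream("Is it a 250 or 350?", toy_classifier):
    print(f"{prefix!r:25} {decision}")
```

Replaying prefixes this way makes it easy to see the exact token at which a model changes its mind.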

We asked a simple question: What happens if the ASR system is slow to send punctuation?

We stripped the punctuation from the test set and re-ran the evaluation.

- Original: "Is it a 250 or 350?" → Complete

- Normalized: "is it a 250 or 350" → Incomplete

The results were devastating. Our "perfect" domain model's predictions flipped in 67% of cases when punctuation was removed.

We hadn't built a semantic turn detector. We had built a punctuation detector.
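The flip measurement itself is simple to express. This is a rough sketch under assumptions: `flip_rate`, `perturb`, and the rule-based `punctuation_rule` classifier are illustrative names, and the toy inputs are made up to exercise the function rather than drawn from the dataset.

```python
from typing import Callable, Sequence


def flip_rate(
    texts: Sequence[str],
    classify: Callable[[str], str],
    perturb: Callable[[str], str],
) -> float:
    """Fraction of inputs whose predicted label changes after perturbation."""
    if not texts:
        raise ValueError("texts must not be empty")
    flips = sum(classify(t) != classify(perturb(t)) for t in texts)
    return flips / len(texts)


def punctuation_rule(text: str) -> str:
    # Stand-in for a model that has only learned terminal punctuation.
    return "Complete" if text.endswith((".", "?")) else "Incomplete"


def drop_terminal_punctuation(text: str) -> str:
    return text.rstrip(".?!").lower()


toy_inputs = ["Is it a 250 or 350?", "Is it a", "Yeah, that's fine.", "Can you check if"]
print(flip_rate(toy_inputs, punctuation_rule, drop_terminal_punctuation))
```

A model that relies on meaning rather than punctuation should show a flip rate close to zero under this kind of perturbation.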

The model had learned a simple rule:

- Ends with "." or "?" → Complete

- No punctuation → Incomplete

This is useless in production. ASR systems typically insert punctuation after they have determined a pause is significant. If our model waits for the punctuation to decide the turn is over, it's just echoing the ASR system's decision 50ms later. We aren't adding intelligence; we're adding latency.

Phase 3: Forcing Semantic Understanding

To build a model that actually understands when a sentence is grammatically or semantically complete, we had to blind it to the cheat codes.

We retrained all our models on normalized text: lowercase, no punctuation.

- Original: "Yeah, that's fine."

- Normalized: "yeah thats fine"

If the model wanted to know if the sentence was done, it would have to learn that "that's fine" is a complete thought, whereas "that's fine if" is not.
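The normalization step can be approximated with the standard library alone. This is a sketch, not the exact preprocessing used in the experiments: the function name `normalize` is an assumption, and `string.punctuation` covers ASCII punctuation only, so curly quotes or other Unicode marks would need extra handling.

```python
import string

# Translation table that deletes every ASCII punctuation character.
_PUNCTUATION = str.maketrans("", "", string.punctuation)


def normalize(text: str) -> str:
    """Lowercase, drop ASCII punctuation, and collapse whitespace."""
    stripped = text.lower().translate(_PUNCTUATION)
    return " ".join(stripped.split())


print(normalize("Yeah, that's fine."))   # yeah thats fine
print(normalize("Is it a 250 or 350?"))  # is it a 250 or 350
```

Applying the same function to training and evaluation text keeps the two distributions aligned.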

The Real Results

On the normalized test set, the truth came out.

- General Normalized: 72.2% accuracy on call center data.

- Domain Normalized: 77.9% accuracy on call center data.

The 100% was a mirage. The real accuracy of semantic turn detection was roughly 78%.

However, this result was actually more exciting. It proved that models can learn turn completion from text alone, without relying on punctuation. The domain-specific training still provided a 5.7 percentage point lift (77.9% vs 72.2%).

We had traded fake perfection for real, robust utility. This model could now work on raw ASR streams and provide an independent signal about turn completion.

Phase 4: Operationalizing the Imperfect

So we have a model that is 78% accurate. Is that good enough?

In a 20-turn conversation, 78% accuracy implies roughly 4 errors (20 × 0.22 ≈ 4.4). Those errors come in two flavors:

- Interruptions: Predicting "Complete" too early.

- Missed Turns: Predicting "Incomplete" when the user is done.

For customer service, interruptions are deadly. They make the bot feel aggressive and "broken." Missed turns just feel like lag.

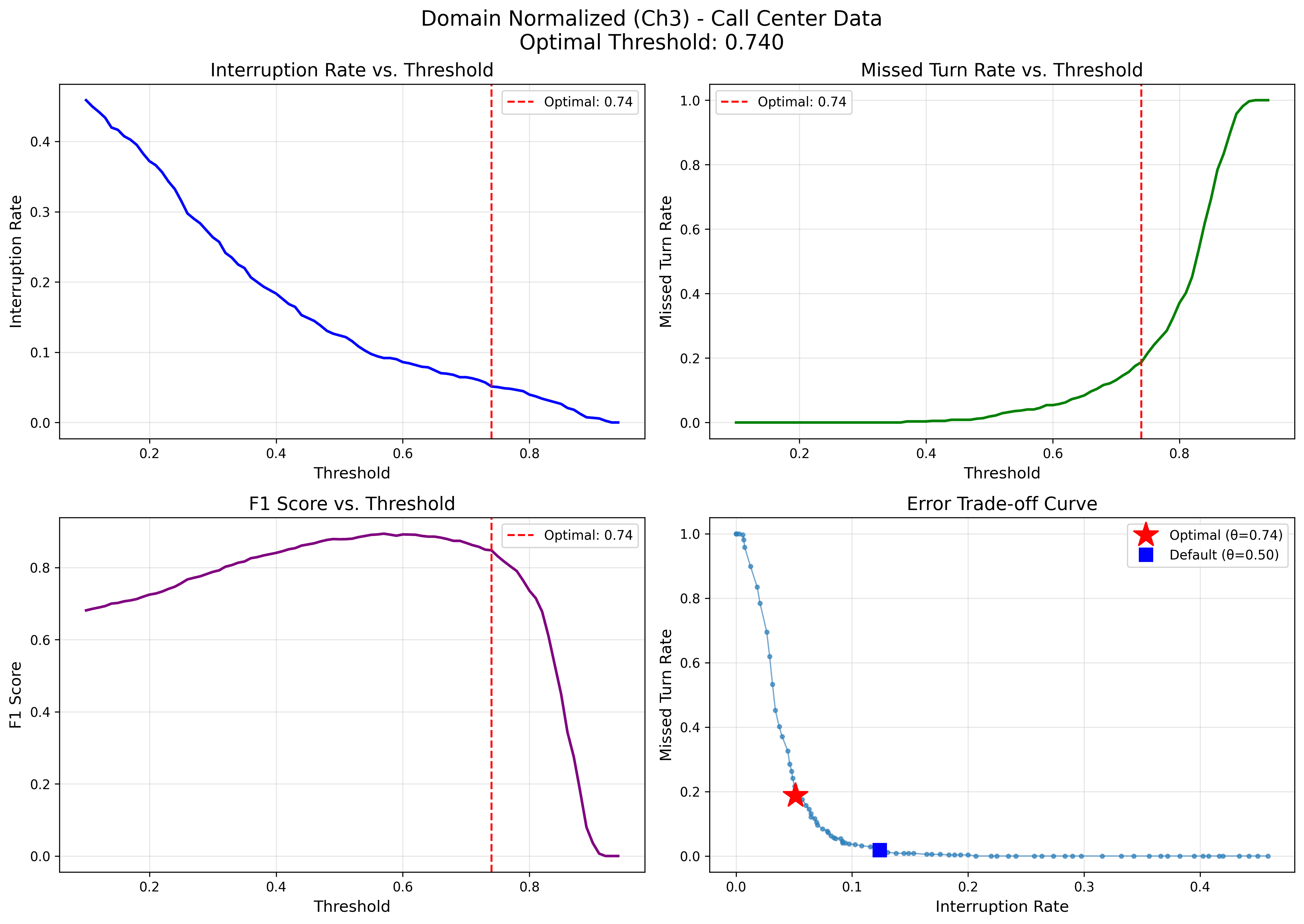

We analyzed the Interruption Rate (IR) of our new normalized model and found it was interrupting users in 12.4% of turns. That's way too high.

But we realized we could tune the decision threshold. By default, the model predicts "Complete" if confidence > 50%. By raising that bar, we can tell the model: "Only interrupt the user if you are really sure."

By raising the threshold to 0.74, we reduced the interruption rate from 12.4% to 5.1%. The trade-off was a higher Missed Turn Rate (18.7%), meaning the bot would sometimes wait a bit longer to confirm the user was done. For a polite support bot, this is the right trade-off.
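The effect of moving the threshold can be explored with a small sweep over held-out predictions. The sketch below makes several assumptions: `probs` holds the model's confidence that a turn is complete, both rates are measured over all evaluated examples, and the toy numbers are invented purely to show the mechanics (they are not results from the experiments).

```python
from typing import List, Sequence, Tuple


def error_rates(
    probs: Sequence[float], is_complete: Sequence[bool], threshold: float
) -> Tuple[float, float]:
    """Return (interruption_rate, missed_turn_rate) at a given threshold.

    Assumption: both rates are fractions of all evaluated examples.
    """
    if len(probs) != len(is_complete) or not probs:
        raise ValueError("inputs must be non-empty and of equal length")
    pairs = list(zip(probs, is_complete))
    # Interruption: predicted "Complete" while the user is still speaking.
    interruptions = sum(p > threshold and not done for p, done in pairs)
    # Missed turn: predicted "Incomplete" although the user is done.
    missed = sum(p <= threshold and done for p, done in pairs)
    return interruptions / len(pairs), missed / len(pairs)


def sweep(
    probs: Sequence[float], is_complete: Sequence[bool], thresholds: Sequence[float]
) -> List[Tuple[float, float, float]]:
    """Evaluate both error rates at each candidate threshold."""
    return [(t, *error_rates(probs, is_complete, t)) for t in thresholds]


# Toy data, invented for illustration only.
toy_probs = [0.95, 0.80, 0.60, 0.55, 0.30, 0.70, 0.90, 0.20]
toy_labels = [True, True, False, False, False, True, True, False]

for t, ir, mtr in sweep(toy_probs, toy_labels, [0.50, 0.74]):
    print(f"threshold={t:.2f}  interruption={ir:.3f}  missed={mtr:.3f}")
```

On the toy data, raising the threshold trades interruptions for missed turns, which is the same direction of trade-off described above.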

A Note on Training Infrastructure

We approached this experiment with a hybrid workflow. For rapid debugging and code development, we ran training locally on MacBook Pros with M-series chips (Apple Silicon), which are surprisingly capable for fine-tuning smaller BERT models.

However, when it came time to run the full suite of experiments—training four model variants across multiple phases (Original vs. Normalized)—we offloaded the work to AWS SageMaker. By spinning up ml.g4dn.xlarge instances on demand, we could launch all experiments simultaneously and get results in under an hour, rather than waiting for days of sequential local training.

The codebase is designed to be infrastructure-agnostic. The training script relies on the standard Hugging Face Trainer API, so you can run the exact same code on your laptop or scale it up to the cloud if you don't have powerful local hardware.

The Lessons

Our journey from "100% accuracy" to "78% accuracy" taught us three things about AI development:

- Distrust Perfection: If a model scores 100%, it's probably cheating. In our case, it was overfitting to ASR punctuation artifacts.

- Test Like You Fly: Our initial test set had perfect punctuation. Real-time ASR streams don't. Testing on normalized text revealed the model's true capabilities.

- Metrics Aren't Operations: Accuracy is a research metric. In production, you care about Interruption Rate vs. Latency. Threshold tuning allows you to convert a "mediocre" model into a highly effective product by choosing the right errors to minimize.

You can replicate our results, download the datasets, and see the full code in our GitHub repository.