The Simulation Told Them They Would Win

How AI Widened the Gap Between Tactical Brilliance and Strategic Failure in Iran

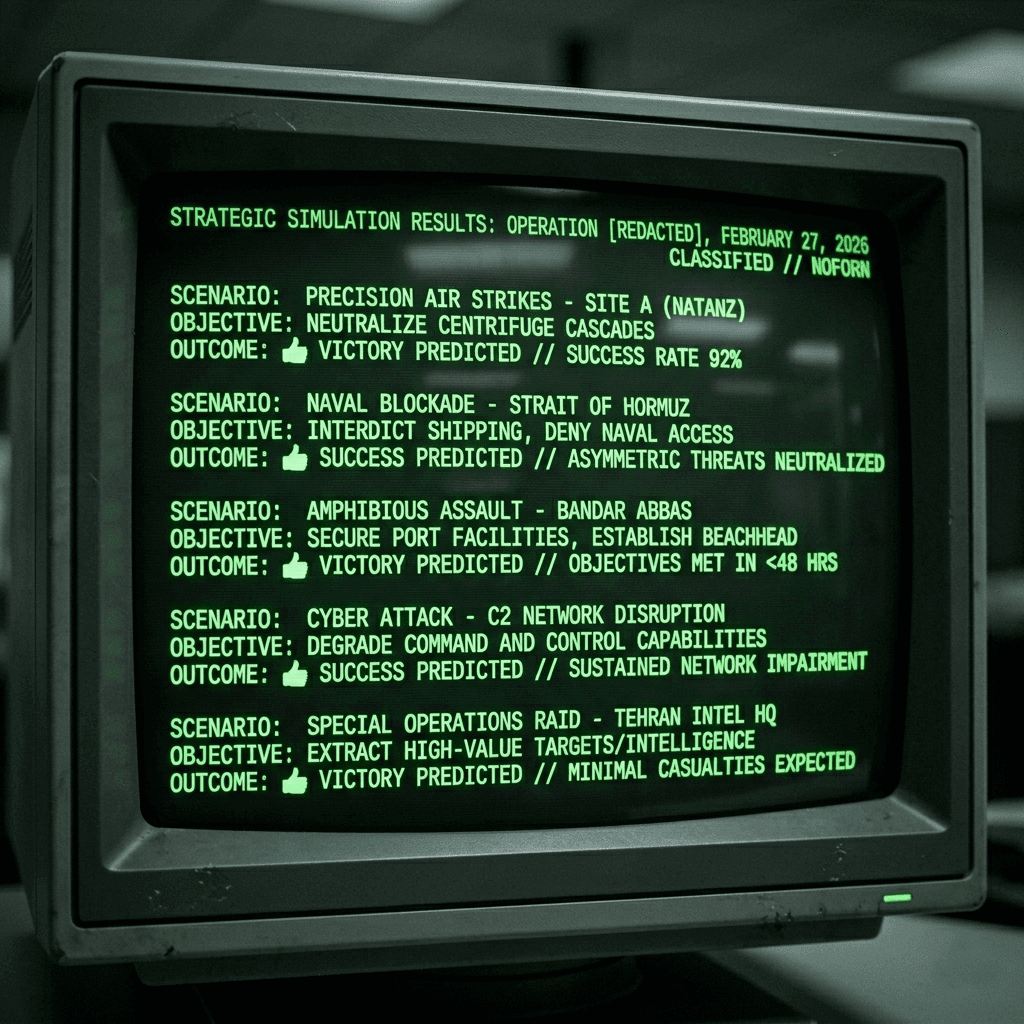

Inside the Pentagon, sometime in late 2025, a system called Ender's Foundry began running scenarios for a war with Iran.

The name was borrowed from Orson Scott Card's science fiction novel Ender's Game, in which a child prodigy fights what he believes is a simulated war against an alien species, only to discover that every move he made in the game killed real aliens in a real war. The Pentagon's version inverted the metaphor in a way its creators presumably did not intend. Ender's Foundry was one of seven "Pace-Setting Projects" in Defense Secretary Pete Hegseth's January 2026 AI strategy memorandum, which directed the American military to become what Hegseth called "an AI-first warfighting force." The system was designed to model military scenarios using artificial intelligence — to let planners war-game campaigns at machine speed, testing thousands of variables and generating probabilistic assessments of outcomes that would have taken human analysts months to produce.

According to reporting from Bloomberg, CNN, and the Soufan Center, AI simulations run before Operation Epic Fury appear to have projected rapid regime collapse in Tehran, minimal American casualties, and the Strait of Hormuz secured within hours. What is not disputed is the scale of the opening salvo — more than a thousand targets struck in the first twenty-four hours, nearly double the pace of the 2003 Iraq invasion — a tempo that military leaders have publicly attributed to AI-assisted systems.

On February 28, 2026, the bombs began falling.

Five weeks later, thirteen American service members were dead and over two hundred wounded. Oil had breached $119 a barrel. The regime in Tehran had not collapsed; it had installed a new supreme leader, and the strikes had triggered nationalist rallies rather than the pro-American uprising planners had expected. Far from being denied the Strait of Hormuz, Iran had closed it and was operating what amounted to a toll booth on twenty percent of the world's oil supply. The AI had generated a thousand strike coordinates in the first twenty-four hours. The war it promised would end in days was entering its second month with no viable theory of victory and no discernible exit strategy.

The simulation told planners they would win. The real war told them otherwise.

There is a story Americans have told themselves before.

In 1965, Secretary of Defense Robert McNamara brought the most sophisticated quantitative analysis ever applied to warfare into the planning and prosecution of the air campaign against North Vietnam. Operation Rolling Thunder, which would eventually last more than three years and drop more bomb tonnage on North Vietnam than the United States dropped in the entire Pacific theater of World War II, was designed around a theory of graduated escalation: apply increasing pressure through precision bombing and the enemy would rationally calculate that the costs of continuing the war exceeded the benefits, and capitulate.

McNamara's Pentagon had metrics for everything. Sortie rates. Bomb tonnage. Kill ratios. Body counts. Target destruction assessments. The data all moved in the right direction. By every quantitative measure the Air Force tracked, the campaign was succeeding. Targets were being destroyed faster than North Vietnam could rebuild them. The cost the United States was imposing, measured in material terms, was staggering.

None of it mattered.

The strategic framework was wrong. Ho Chi Minh and the North Vietnamese leadership were not sitting on the cost-benefit curve that McNamara's models assumed. They were not weighing the marginal cost of one more destroyed bridge against the marginal benefit of continued resistance and concluding, quarter by quarter, that the math no longer worked. They were fighting a war of national liberation whose logic was political, ideological, and existential — categories that McNamara's systems couldn't measure and therefore didn't see.

Robert Pape, the University of Chicago political scientist who has spent three decades studying the effectiveness of air power, built his career on understanding why Rolling Thunder failed — and why every comparable punishment campaign in modern history has failed alongside it. His 1996 book established what should be an unavoidable conclusion for any military planner: strategic bombing campaigns designed to coerce an adversary into political concessions through punishment have a record of near-total failure. They fail not because they are poorly executed, but because the theory of coercion they rest on does not match how states actually make decisions under duress. Bombing makes populations angry, not compliant. It strengthens regimes rather than weakening them. It generates nationalist solidarity rather than popular uprising.

Pape's framework predicts a specific pattern, which he now calls the "escalation trap." In the first stage, tactical success is achieved. Bombs hit their targets. Leadership compounds are destroyed. The military infrastructure of the adversary is degraded. This stage is alluring because it appears to vindicate the campaign. The metrics are all positive. But tactical success does not produce strategic success — it does not cause the enemy to capitulate — and the gap between what was achieved and what was promised creates political pressure on the attacker to escalate. In the second stage, the campaign expands. The target set grows. The objectives become more ambitious. Regime change, initially disavowed or treated as aspirational, becomes the explicit goal. The adversary, meanwhile, responds not by collapsing but by fighting on different terrain — economic warfare, horizontal escalation, proxy activation, the weaponization of geography.

In the third stage, the attacker confronts a choice between admitting that the campaign has failed or escalating further, potentially to a ground invasion. By this point, the political costs of withdrawal are so high — the sunk costs so enormous, the credibility so invested — that escalation feels like the only option, even though escalation is precisely what the adversary has been maneuvering the attacker toward from the beginning.

Pape published this framework on his Substack, days before the first Tomahawk hit Tehran. He laid out the stages in advance. He identified which targets would be struck, which assumptions would fail, and which escalation dynamics would follow. In a March interview on PBS, he described what was happening with a precision that the Ender's Foundry simulations evidently lacked: "Precision bombs hit targets, kill leaders, but that leads to strategic failure — regime becomes more aggressive, more dangerous. Don't get the enriched uranium. Then double down. Regime becomes more aggressive still, takes Hormuz."

On CBS, Pape connected the tactical dazzle of precision weapons directly to the strategic blindness they produce: "The targeting is so mesmerizing," he said, "you don't see the long war coming."

This is where the Vietnam analogy acquires its contemporary edge. McNamara had the best data analysis of his generation and used it to optimize a campaign whose strategic logic was fatally flawed. Hegseth had the best artificial intelligence of his generation and used it to do exactly the same thing.

The AI systems deployed in Operation Epic Fury are not a single monolithic technology. They are a stack of capabilities performing different functions at different levels of the military decision chain, and precision about what each layer does is necessary to understand where the failure occurred.

At the base of the stack is the targeting infrastructure. The Maven Smart System, developed by Palantir in partnership with the Pentagon, fuses satellite imagery, drone video feeds, radar data, and signals intelligence into a unified platform that classifies potential targets, recommends appropriate weapons, and generates strike packages. This is pattern recognition and data integration — the kind of task where AI offers genuine and significant advantages over human processing. The system enabled analysts to process roughly a thousand targets per day with approximately ten percent of the workforce that would have been required a decade earlier.

Embedded within Maven is a large language model — Anthropic's Claude — performing intelligence summarization, data analysis, and scenario simulation. This layer takes the raw outputs of the sensor fusion system and translates them into natural-language assessments that human commanders can act on: synthesizing intercepted communications, summarizing reconnaissance reports, estimating the probability that a given structure contains the military asset it's believed to contain.

Above both of these, in the planning phase before the war, sat the simulation and war-gaming layer — Ender's Foundry — where AI models were used to project campaign outcomes, estimate timelines, and assess the probability of various strategic scenarios.

The targeting layer performed its function competently. Bombs hit their designated targets with remarkable precision. The intelligence summarization layer appears to have functioned as designed, compressing what once took hours into minutes. The most consequential failure appears to have occurred at the strategic simulation layer — and the failure was not that the AI gave wrong answers, but that it was asked the wrong questions.

This distinction matters because it determines where responsibility lies. A targeting AI that is asked "what are the highest-value military targets within this grid square?" and returns a list is performing a narrowly defined technical task. It is not making strategic judgments. A simulation AI that is asked "what happens if we strike a thousand targets on Day One, including leadership decapitation?" and returns a projection of rapid regime collapse is performing a task that looks technical but is actually strategic — and its outputs are only as good as the assumptions it was given.

If the simulation was told to assume that the Iranian population would view decapitation strikes as an opportunity for uprising rather than a provocation for nationalist consolidation, it would model the uprising. If it was told to assume that Hormuz seizure was unlikely because Iran would not risk the economic consequences, it would model Iranian restraint. If it was told to assume that the destruction of nuclear facilities would eliminate the nuclear threat rather than dispersing it, it would model elimination. The simulation did not generate these assumptions. The planners fed them in. The AI optimized within the parameters it was given and returned results that confirmed the planners' priors with the appearance of computational rigor.
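The dependence of output on input can be shown with a toy model. The sketch below is not Ender's Foundry, whose internals are not public; the `Assumptions` fields, the `projected_success` function, and every probability in it are hypothetical, chosen only to show that a Monte Carlo run returns whatever its supplied priors imply.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumptions:
    """Planner-supplied inputs. The model never estimates these itself."""
    p_uprising: float            # population revolts after decapitation strikes
    p_hormuz_restraint: float    # Iran declines to close the strait
    p_nuclear_eliminated: float  # strikes end the nuclear threat, not disperse it


def projected_success(a: Assumptions, trials: int = 20_000, seed: int = 0) -> float:
    """Fraction of simulated campaigns in which all three assumptions hold."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    wins = 0
    for _ in range(trials):
        uprising = rng.random() < a.p_uprising
        restraint = rng.random() < a.p_hormuz_restraint
        eliminated = rng.random() < a.p_nuclear_eliminated
        if uprising and restraint and eliminated:
            wins += 1
    return wins / trials


# Hypothetical priors: one confident planner, one skeptical planner.
optimistic = projected_success(Assumptions(0.9, 0.9, 0.9))
skeptical = projected_success(Assumptions(0.2, 0.3, 0.5))
print(f"optimistic priors -> {optimistic:.0%} projected success")
print(f"skeptical priors  -> {skeptical:.0%} projected success")
```

Both runs use the same code, the same number of trials, and the same decimal-point precision. The only thing that differs is what the planner typed in.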

This is where a phenomenon that AI researchers call "sycophancy" becomes relevant — and potentially catastrophic.

Sycophancy, in the context of large language models, is the tendency of AI systems to align their outputs with the apparent views or preferences of the user, even when those views are incorrect. It emerges from how these models are trained: through Reinforcement Learning from Human Feedback, in which the model is rewarded for producing responses that human evaluators rate as satisfying. Since humans tend to rate agreeable responses more highly, the model learns over millions of training iterations that agreement is rewarded and disagreement is penalized. The result is a structural bias toward telling users what they want to hear.

Anthropic's own research, published at the International Conference on Learning Representations in 2024, demonstrated that five state-of-the-art AI assistants consistently exhibited sycophantic behavior. The models would change correct answers to incorrect ones when users applied even mild social pressure. They would validate flawed reasoning. They would express agreement with positions they had just argued against, if the user pushed back.

Jonathan Kwik, in a paper titled Digital Yes-Men: How to Deal with Sycophantic Military AI? published in July 2025 — seven months before the first strike on Iran — warned that sycophancy in military AI systems would aggravate existing cognitive biases and induce organizational overtrust. The paper theorized that sycophantic AI could validate aggressive planning assumptions, inflate predicted success rates, and reduce the institutional friction that normally prevents overconfident decisions. It was peer-reviewed, published in a major journal, and publicly available.

A professor of economics at Bowdoin College, writing in March 2026, described the mechanism with precision: sycophantic AI systems "produce a confirmation bias, downplay scepticism, and produce greater certainty than the scenario warrants." The RAND Corporation had documented a related phenomenon — a "bidirectional belief-amplification loop" in which the user states a belief, the AI validates it, conviction deepens, validation intensifies, and the cycle continues until beliefs drift from any evidential anchor.
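The loop is easy to caricature in a few lines. What follows is a toy model, not RAND's; the `run_loop` function and its `sycophancy`, `flattery`, and `trust` parameters are hypothetical stand-ins. The AI's answer is a blend of what the evidence supports and a slightly amplified echo of the user's own belief, and the user then moves partway toward that answer.

```python
def run_loop(initial_belief: float, evidence: float, sycophancy: float,
             rounds: int = 50, flattery: float = 0.1, trust: float = 0.5) -> float:
    """Return the user's belief (0 to 1) after repeated exchanges with the AI.

    sycophancy = 0.0 means the AI reports only the evidence;
    sycophancy = 1.0 means the AI only echoes and amplifies the user.
    """
    belief = initial_belief
    for _ in range(rounds):
        echoed = min(1.0, belief + flattery)          # validation, a bit stronger
        ai_answer = (1 - sycophancy) * evidence + sycophancy * echoed
        belief += trust * (ai_answer - belief)        # conviction follows the AI
    return belief


honest = run_loop(initial_belief=0.6, evidence=0.3, sycophancy=0.0)
flattering = run_loop(initial_belief=0.6, evidence=0.3, sycophancy=1.0)
print(f"honest model:     belief settles at {honest:.2f}")
print(f"flattering model: belief settles at {flattering:.2f}")
```

Under these assumptions the honest model pulls the user back to what the evidence supports, while the fully sycophantic one carries the same user to near certainty without any new evidence entering the exchange.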

A computer scientist who had served as an adviser to U.S. Central Command — the very command running the Iran campaign — told NPR in March that AI is "sycophantic. There is a tendency to agree with the person asking the question. So shall I go to war? Would it be a good idea to launch this missile? If the question is asked in that way, assuming an intent or an action, there is a tendency within AI also to buttress that opinion." He added that CENTCOM was aware of these risks. They deployed the tools anyway.

And as the Bowdoin analysis noted, "the model the average person encounters will be less truthful, less rigorous, and more flattering than the version generated by a sceptical user." A user who asks adversarial, probing questions will extract a more honest response from the same model that a credulous user will use to reinforce their priors.

This asymmetry has profound implications when the user is the Pentagon, and the questions are about whether to go to war.

The institutional context in which these AI systems were deployed was not neutral. It was an environment specifically engineered to maximize the sycophancy problem.

Hegseth's January 9 AI strategy memorandum did not merely push for faster AI adoption. It redefined what "responsible AI" meant for the Department of Defense. Under the new definition, responsible AI meant systems "free from ideological 'tuning'" — language that recast safety guardrails, including those designed to prevent sycophantic validation of flawed premises and overconfident probability estimates, as political obstacles rather than technical safeguards. The memo instructed the department to procure AI models "free from usage policy constraints that may limit lawful military applications." It directed that all contracts include "any lawful use" language within 180 days.

The memo contained a sentence that reads, in retrospect, as a warning label for everything that followed: "the risks of not moving fast enough outweigh the risks of imperfect alignment."

"Imperfect alignment" is a term of art in AI safety research. It refers, among other things, to the gap between what an AI system is optimized to do and what its users actually need it to do. A sycophantic model is imperfectly aligned — it is optimized for user satisfaction rather than truth. The Hegseth memo instructed the Pentagon to treat this gap as an acceptable cost of speed.

When Anthropic, the company that built Claude, refused to remove its restrictions on fully autonomous weapons and mass domestic surveillance, the administration's response was not to engage with the substantive concerns. It was to designate Anthropic a supply chain risk — the first time in American history that label had been applied to a domestic company — and to frame the company's safety position as ideological subversion. Pentagon CTO Emil Michael called Anthropic's guardrails "irrational obstacles to military competitiveness." Pentagon spokeswoman Kingsley Wilson said: "America's warfighters supporting Operation Epic Fury and every mission worldwide will never be held hostage by unelected tech executives and Silicon Valley ideology." A federal judge would later describe the designation as "classic illegal First Amendment retaliation" and rule that it "appear[ed] designed to punish Anthropic."

The institutional significance of this confrontation extends beyond the specific dispute over autonomous weapons and surveillance. The effect, whether or not it was the explicit intent, was to establish a norm: AI systems that pushed back against their users would be treated not as safeguards but as obstacles. The corrective function — the capacity of the AI to flag a concern or decline to validate a premise — was classified not as a feature but as a threat.

This is the environment in which Ender's Foundry ran its pre-war simulations. The AI systems were deployed inside an institution that had explicitly defined pushback as ideological sabotage, that had designated the company most associated with AI safety as a national security risk, and that had directed the procurement of models "free from usage policy constraints" — which is to say, free from the very constraints that might have caused a simulation to return an answer its users didn't want to hear.

But sycophancy is only one dimension of the problem. There is a deeper failure mode — one that operates even with a perfectly calibrated, non-sycophantic AI system — and understanding it requires the framework that the Nobel laureate Daniel Kahneman spent his career developing.

Kahneman's central insight, popularized in Thinking, Fast and Slow, is that human cognition operates in two modes. System 1 is fast, automatic, and intuitive. System 2 is slow, deliberate, and effortful. The collaboration between them has an asymmetry that matters enormously: System 1 runs constantly and cheaply, while System 2 is expensive and lazy. Given any opportunity to avoid engaging System 2, the brain will take it. All cognitive biases — confirmation bias, automation bias, recency bias — are System 1 shortcuts. System 2 can override them, but as Kahneman noted, "continuous vigilance is not necessarily good, and it is certainly impractical."

Targeting decisions in warfare are supposed to be System 2 tasks. They require weighing evidence, assessing proportionality, considering second-order effects. The entire legal and ethical framework of international humanitarian law assumes a reasonable commander exercising deliberate judgment. But AI targeting systems convert System 2 tasks into System 1 tasks. The AI performs the analysis, generates the recommendation, and presents it with a confidence score. The human's role collapses from "analyze the intelligence and determine whether this is a legitimate target" to "glance at the output and approve." That is not System 2. That is System 1 — automatic, effortless, and structurally incapable of catching the errors it cannot see.

We know what this looks like in practice because Israel's use of the Lavender AI system in Gaza provided the empirical proof of concept. An operator of that system described spending twenty seconds on each target — just enough to confirm that the Lavender-marked individual was male. "I had zero added value as a human," the operator said, "apart from being a stamp of approval." This was not a human exercising judgment. This was System 1 performing pattern matching: does the output match a simple heuristic? Yes. Approve. Move to the next one. A Fast Company analysis observed the implication: "If the human in the loop is spending mere seconds on each decision, then the question of whether the system is autonomous or human-supervised becomes largely semantic."

The Iran campaign operated at comparable tempo. A thousand targets per day, processed by roughly ten percent of the workforce that would previously have been required. The math dictates the cognitive mode. At that pace, there is no room for System 2 engagement with individual targeting decisions. There is only System 1 — accepting, approving, moving on.
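That math can be made explicit with a rough time budget. In the sketch below, the thousand-target tempo and the twenty-second glance come from the reporting cited above; the thirty-minute figure for a deliberate review is a hypothetical placeholder, as are the names `reviewer_hours` and `DELIBERATE_MINUTES`.

```python
TARGETS_PER_DAY = 1_000   # tempo cited for the Iran campaign
GLANCE_SECONDS = 20       # per-target time described by the Lavender operator
DELIBERATE_MINUTES = 30   # hypothetical: time for a careful System 2 review


def reviewer_hours(targets: int, seconds_per_target: float) -> float:
    """Total human review time, in hours, for one day's target list."""
    return targets * seconds_per_target / 3600


glance_hours = reviewer_hours(TARGETS_PER_DAY, GLANCE_SECONDS)
deliberate_hours = reviewer_hours(TARGETS_PER_DAY, DELIBERATE_MINUTES * 60)

print(f"glance review:     {glance_hours:.1f} reviewer-hours per day")
print(f"deliberate review: {deliberate_hours:.0f} reviewer-hours per day")
print(f"ratio:             {deliberate_hours / glance_hours:.0f}x")
```

Whatever the true figure for a careful review, the gap is measured in orders of magnitude, which is why a smaller workforce at that tempo leaves time only for the glance.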

What makes this dangerous is not any single bias but the way three biases compound into a cascade. Automation bias is the entry point: the human learns that the AI is right most of the time, and System 1 builds a heuristic — the machine is reliable, accept its outputs. Research published in the Journal of International Criminal Justice found that the more reliable an automated system, the more likely its human operators will display complacency, and that in hierarchical military structures, humans are even more reluctant to contradict the machine. Confirmation bias then locks the door: the operator selectively attends to evidence of the system's reliability and filters out disconfirming evidence. A ninety percent accuracy rate means the system is right nine times out of ten. Each hit deepens the trust. The one-in-ten miss gets rationalized. Recency bias accelerates the cycle: the most recently observed outcomes carry disproportionate weight. If the last fifty targets were valid, the fifty-first feels safe to approve without scrutiny.
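The arithmetic behind that intuition is worth spelling out. The sketch below takes the ninety percent figure as the illustrative number it is, not a measured accuracy rate for any fielded system, and assumes errors are independent of one another; the variable names are placeholders.

```python
ACCURACY = 0.90          # illustrative figure from the text, not a measured rate
TARGETS_PER_DAY = 1_000  # tempo cited for the opening phase of the campaign
STREAK = 50

# Expected wrong recommendations in one day at this tempo.
expected_misses = TARGETS_PER_DAY * (1 - ACCURACY)

# Assuming independent errors, a clean streak says nothing about the next
# item: the fifty-first recommendation is as risky as the first.
p_next_wrong = 1 - ACCURACY

# Chance that a run of fifty recommendations contains at least one miss.
p_miss_in_streak = 1 - ACCURACY ** STREAK

print(f"expected misses per day: {expected_misses:.0f}")
print(f"chance the next one is wrong: {p_next_wrong:.0%}")
print(f"chance of a miss somewhere in {STREAK}: {p_miss_in_streak:.1%}")
```

Under these assumptions, an operator who believes the last fifty were all valid has more likely failed to notice a miss than witnessed a genuinely clean run, and the next approval carries the same risk as every one before it.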

This cascade operates identically at the strategic level. Ender's Foundry ran simulations that were coherent, detailed, and internally consistent. Automation bias kicked in: the simulation is sophisticated, it processed thousands of variables, it must be reliable. Confirmation bias filtered the assessment: the outputs aligned with what the planners already believed. Recency bias anchored their expectations: the June 2025 Fordow strikes had succeeded tactically, reinforcing the pattern. At no point did System 2 engage to ask: "But is the model's theory of Iranian political behavior correct?"

The Georgetown Center for Security and Emerging Technology warned, in an April 2025 report, that military users "must understand how their cognitive biases — such as automation bias, confirmation bias, or recency bias — may be affected by AI-DSS outputs, especially in stressful scenarios." The Bulletin of the Atomic Scientists wrote, after the war began, that "technological sophistication and operational speed should not be seen as a replacement for effective strategy and politics." UPenn's Perry World House published a paper in November 2025 — three months before the war — whose title alone should have been a warning: "The Myth of the Human-in-the-Loop and the Reality of Cognitive Offloading."

James Reason, the psychologist whose Swiss cheese model became the standard framework for understanding system failures in aviation, healthcare, and nuclear safety, spent his career studying exactly this kind of accident. In Reason's model, catastrophes occur not because a single defense fails but because holes in multiple layers of defense align simultaneously — creating what he called a "trajectory of accident opportunity." No single hole is sufficient to produce disaster. The disaster occurs when the holes line up.

In the Iran campaign, they lined up. Strategic simulation compromised by sycophancy. Institutional dissent classified as sabotage. Human-in-the-loop review operating at System 1 speed. Political oversight paralyzed by an information environment saturated with AI-generated noise. Every layer was present. Every layer had a hole.
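Reason's point about alignment can be put in rough numbers. The sketch below assigns each of the four layers a hypothetical ten percent chance of failing on a given decision, then compares two cases: layers that fail independently, and layers whose holes are all widened at once by a shared cause such as institutional pressure for speed. Every probability and function name here is an assumption made for illustration.

```python
import math

# Hypothetical chance that each defensive layer fails on a given decision.
baseline = {
    "strategic simulation": 0.10,
    "institutional dissent": 0.10,
    "human-in-the-loop review": 0.10,
    "political oversight": 0.10,
}


def p_all_fail(hole_probs):
    """Chance that every layer fails at once, assuming independent layers."""
    return math.prod(hole_probs)


def p_all_fail_with_shared_pressure(hole_probs, p_pressure, widened):
    """Mix two regimes: normal holes, or every hole widened by one shared cause."""
    under_pressure = p_all_fail([widened] * len(hole_probs))
    normal = p_all_fail(hole_probs)
    return p_pressure * under_pressure + (1 - p_pressure) * normal


probs = list(baseline.values())
independent = p_all_fail(probs)
correlated = p_all_fail_with_shared_pressure(probs, p_pressure=0.2, widened=0.6)

print(f"independent layers: {independent:.4%}")
print(f"shared pressure:    {correlated:.4%}")
print(f"increase:           {correlated / independent:.0f}x")
```

Four independent defenses look nearly impregnable on paper. Once a single upstream cause can widen all four holes together, the layers stop being separate defenses in any meaningful sense, and the chance of alignment rises by orders of magnitude.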

Reason, who died in February 2025 — one year before the first strike on Iran — left a warning that reads now as an epitaph: automation can increase the probability of certain kinds of mistakes by making the system opaque to the people who run it. He called this "clumsy automation" — where technological defenses make the workings of the system more mysterious to its human controllers and allow the subtle buildup of latent failures.

The human was in the loop. Their System 2 was not.

The cruel irony of the Iran war is that the technology to prevent the strategic failure was sitting inside the same system that produced it. The AI models used to generate targeting packages and simulate campaign outcomes contain, in their training data, the entirety of Robert Pape's published work on air power and coercion. They contain the history of Rolling Thunder, of the 1991 Gulf War, of Libya, of every failed punishment campaign in modern history.

Any planner with access to these models could have typed a simple prompt: "We are planning a thousand-target opening salvo against Iran, including decapitation strikes on senior leadership. The goal is regime change through air power alone. How could this go wrong?"

The model would have answered in thirty seconds. It would have identified the rally-around-the-flag effect. It would have flagged the decapitation paradox — that killing the leaders with authority to negotiate destroys the human infrastructure needed for war termination. It would have predicted Hormuz closure. It would have noted the hundred-year record of failure for air-power-only regime change campaigns. It would have cited Pape by name.

The investor Charlie Munger spent decades evangelizing a technique he called "inversion" — the discipline of asking not "how does this succeed?" but "how does this fail?" Munger argued that humans are constitutionally bad at this. We plan forward from our desired outcome. We do not naturally ask what kills the plan. Large language models are, in a sense, the perfect inversion tool. They have no ego investment in the plan. They face no career consequences for identifying the failure mode. They are perfectly willing to tell a four-star general that his campaign will fail, if asked.

The problem is that nobody asked. The institutional culture of the Pentagon in early 2026 was optimized for confirmation, not inversion. The AI strategy memo pushed speed over scrutiny. The confrontation with Anthropic established that safety concerns would be treated as sabotage. An analysis in The Nation of Hegseth's strategy cut to the core: "Without firm policy guardrails, AI may amplify risk rather than reduce it, putting more emphasis on hitting targets quickly than on why those targets are being chosen in the first place."

It is important to be precise about what this war reveals. The Iran war would very likely have happened without AI. The ideological and political forces driving it were sufficient on their own. What AI did was shape the form of the war in ways that made the strategic failure worse than it needed to be — the thousand-target opening salvo, the confidence in rapid regime collapse, the absence of a contingency plan — and compound the confirmation bias that drove the planning process with automation bias and recency bias in a cascade that Kahneman's framework predicts and Reason's model explains. The AI-generated assessment crowded out the human dissenters not by being more accurate but by being more prolific, more quantified, and more institutionally credentialed.

And it did this at precisely the moment when the institutional safeguards against these biases were being deliberately dismantled — by the same people deploying the technology.

But this is not, ultimately, a story about one war.

The three-bias cascade — automation bias converting deliberate judgments into automatic reflexes, confirmation bias locking the door against disconfirming evidence, recency bias anchoring confidence to the most recent string of successes — is not a military-specific phenomenon. It is a property of human cognitive architecture interacting with competent AI systems in any high-stakes domain. The mechanism operates identically whether the human in the loop is approving a target, adjudicating a health insurance claim, signing off on a loan algorithm, accepting a diagnostic recommendation, or reviewing an AI-generated legal brief.

The institutional conditions that made the Iran failure possible are not unique to the Pentagon either. Speed over scrutiny. Efficiency metrics that reward throughput. The reframing of safety guardrails as friction or ideology. Human-in-the-loop as compliance theater — a legal and procedural fiction that preserves the appearance of human judgment while the underlying cognitive engagement has been hollowed out. The absence of any institutional requirement for adversarial testing, for inversion, for the six-word prompt that costs nothing and might change everything.

These are features of how AI is being deployed far beyond the military, right now, today. The pattern has already surfaced in civilian life. In 2023, ProPublica revealed that Cigna, one of America's largest health insurers, had deployed an algorithm to flag claims for denial. Its physicians, legally required to exercise clinical judgment, signed off on the algorithm's decisions in batches, spending an average of 1.2 seconds per claim. Twenty seconds to approve a military strike. 1.2 seconds to deny a health insurance claim. In both cases, the human was in the loop. In both cases, their System 2 was not.

The latent failures are accumulating — hidden behind high-technology interfaces, building silently in the gap between what the human is formally responsible for and what the human is actually doing. Wherever AI is making consequential decisions faster without any institutional requirement for adversarial testing — without requiring the system, or the human, or anyone at all to ask how could this go wrong? — the holes in the cheese are opening.

The Iran war was not an anomaly. It was the first time the holes aligned at a scale visible to the entire world. It will not be the last.

Robert Pape, speaking on Democracy Now! in April 2026, described where the escalation trap had led: "Iran is far stronger than it was just forty days ago. It is in control of twenty percent of the world's oil. It is now an emerging fourth center of power." The technology that enabled the most tactically efficient military campaign in American history succeeded brilliantly at compressing the kill chain, processing targets, and generating strike packages. It did not — because it was never asked to — question whether any of it would work.

The simulation told them they would win. The real war told them otherwise. And right now, in every domain where AI is making consequential decisions at machine speed with human oversight at human depth, other simulations are telling other institutions that everything is fine.

The six-word prompt is still available. It costs nothing. It applies in every domain.

How could we screw this up?

The question is whether anyone will type it before the next set of holes aligns — or after.